Introduction

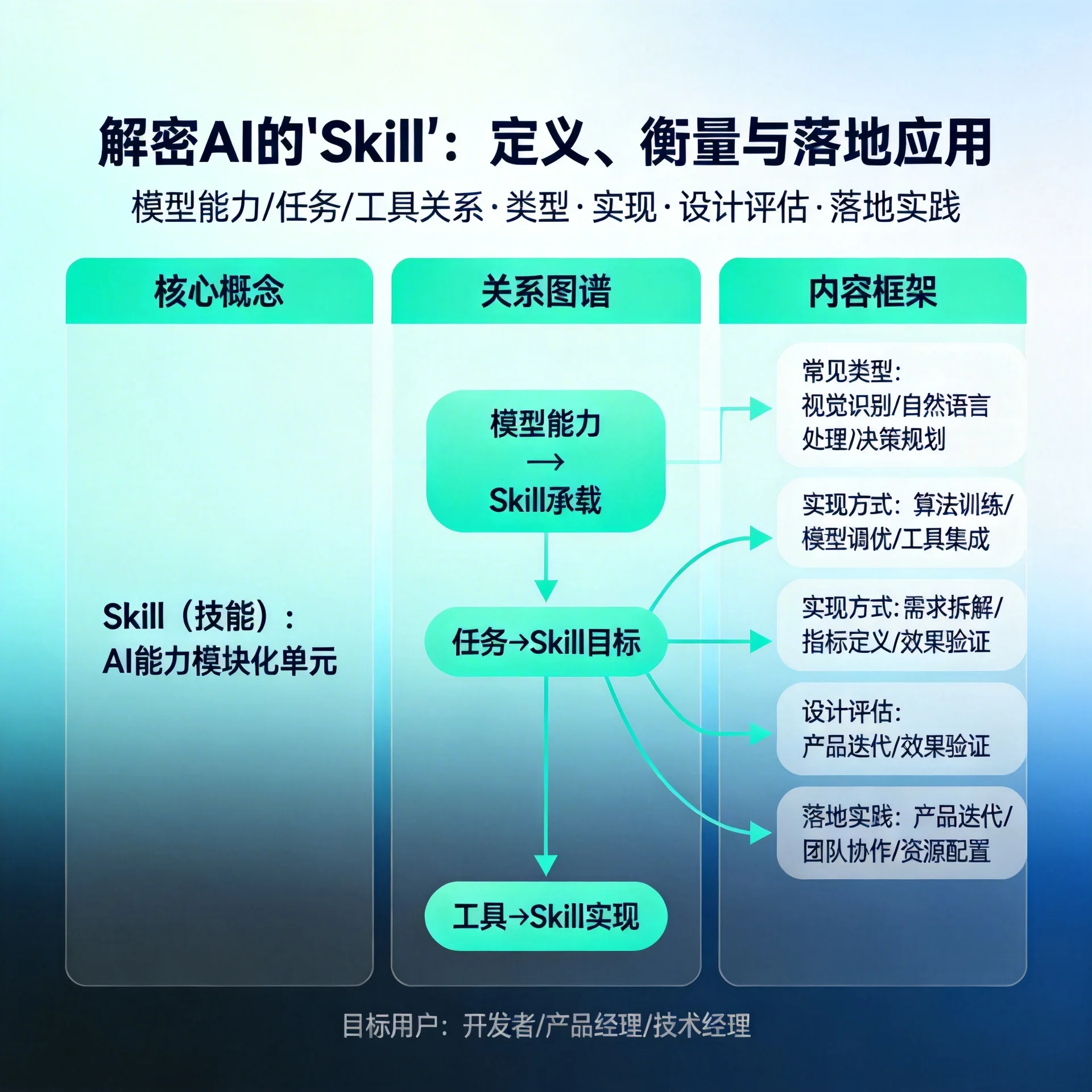

Discussions of AI capabilities increasingly use terms such as "skill", "capability module", and "tool". In productized, pipeline-driven, and multimodal settings in particular, breaking AI capabilities into composable, reusable "skills" has become an important paradigm. This article explains what an AI skill is, how it differs from and relates to models, tasks, and tools, and how to design, evaluate, and deploy skills.

What is an AI skill?

- Definition: A skill is an abstract description of a specific capability or function that an AI can perform, typically defined with clear input/output, constraints, interfaces, and evaluation metrics. It is neither a pure model nor a pure product feature, but a reusable capability unit that lies between the two.

- Examples: Text summarization, question-answering retrieval, image annotation, code generation, sentiment analysis, mathematical reasoning, data cleaning, etc., can all be defined as different skills.

- Difference from "task": A task tends to be oriented toward a specific business scenario (for example, "automated customer service replies"), whereas a skill is more of a functional capability (for example, "retrieve relevant paragraphs from a knowledge base"). A task may be composed of multiple skills.

- Difference from "tool": A tool emphasizes an externally callable interface (for example, a search API, database, or calculator). A skill can encapsulate calls to external tools or rely solely on the model's internal capabilities.
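To make the skill/tool distinction concrete, here is a minimal Python sketch. It assumes a toy calculator stands in for the external tool; the names `calculator_tool` and `arithmetic_skill` and the `ok`/`result`/`error` output fields are hypothetical, chosen only for illustration.

```python
import operator
from typing import Any, Dict

# Supported binary operators for the toy tool (an assumption of this sketch).
_OPS = {
    "+": operator.add,
    "-": operator.sub,
    "*": operator.mul,
    "/": operator.truediv,
}


def calculator_tool(expression: str) -> float:
    """Hypothetical external tool: evaluates '<number> <op> <number>'."""
    left, op, right = expression.split()
    return _OPS[op](float(left), float(right))


def arithmetic_skill(payload: Dict[str, str]) -> Dict[str, Any]:
    """Skill: a fixed input/output contract wrapped around the tool call."""
    try:
        value = calculator_tool(payload["expression"])
    except (KeyError, ValueError, ZeroDivisionError) as exc:
        # The skill, not the tool, decides how failures are reported.
        return {"ok": False, "error": type(exc).__name__}
    return {"ok": True, "result": value}


print(arithmetic_skill({"expression": "6 * 7"}))
print(arithmetic_skill({"expression": "1 / 0"}))
```

The tool only exposes a callable interface; the skill adds the contract and the error handling around it. A skill that relies purely on the model's internal capabilities could keep the same outer shape and simply omit the tool call.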

Common types of skills

- In-model skills: Rely on the model's own reasoning and generation capabilities (e.g., translation, summarization).

- Tool orchestration skills: Wrappers responsible for calling external APIs (retrieval, computation, browser queries).

- Data processing skills: Cleaning and formatting inputs/outputs (e.g., table parsing, date normalization).

- Multi-step/chain skills: Combine multiple skills sequentially or in parallel to complete complex tasks (e.g., retrieval → summarization → formatting).
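As an illustration of a data processing skill, the following sketch normalizes dates to ISO 8601. The list of accepted input formats is an assumption made for this example; a real skill would take it from its contract.

```python
from datetime import datetime

# Assumed set of accepted input formats, tried in order.
_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y")


def normalize_date(text: str) -> str:
    """Return the date in ISO 8601 form (YYYY-MM-DD) or raise ValueError."""
    cleaned = text.strip()
    for fmt in _FORMATS:
        try:
            return datetime.strptime(cleaned, fmt).date().isoformat()
        except ValueError:
            continue  # try the next known format
    raise ValueError(f"unrecognized date format: {text!r}")


print(normalize_date("05/03/2024"))
```

Raising on unknown formats, rather than guessing, keeps the boundary behavior explicit for whichever skill consumes the output next.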

How to design a good skill

- Clear contract: Define input schema, output schema, boundary conditions, and error handling.

- Measurable metrics: Determine evaluation dimensions like accuracy, recall, latency, and stability.

- Composability: Ensure uniform interfaces and clear semantics to facilitate reuse in higher-level tasks.

- Observability: Keep logs, monitoring, input/output examples, and records of failure cases.

Example (pseudo-JSON description):

    {
        "name": "document_retrieval",
        "input": {"query": "string", "top_k": "int"},
        "output": {"documents": "array"},
        "metrics": ["recall@k", "latency_ms"]
    }
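A description like the one above can be enforced at the skill boundary. The sketch below is one possible way to do so in Python; the `TYPE_MAP` mapping and the `validate` helper are assumptions of this example, not part of any standard schema library.

```python
from typing import Any, Dict

# Contract copied from the pseudo-JSON description above.
CONTRACT = {
    "name": "document_retrieval",
    "input": {"query": "string", "top_k": "int"},
    "output": {"documents": "array"},
    "metrics": ["recall@k", "latency_ms"],
}

# Assumed mapping from the contract's type names to Python types.
TYPE_MAP = {"string": str, "int": int, "array": list}


def validate(payload: Dict[str, Any], schema: Dict[str, str]) -> None:
    """Raise ValueError if the payload does not match the flat schema."""
    missing = set(schema) - set(payload)
    extra = set(payload) - set(schema)
    if missing or extra:
        raise ValueError(f"missing={sorted(missing)} unexpected={sorted(extra)}")
    for field, type_name in schema.items():
        value = payload[field]
        expected = TYPE_MAP[type_name]
        # bool is a subclass of int in Python, so reject it explicitly for "int".
        wrong_type = not isinstance(value, expected)
        bool_as_int = expected is int and isinstance(value, bool)
        if wrong_type or bool_as_int:
            raise ValueError(
                f"{field!r} must be {type_name}, got {type(value).__name__}"
            )


# Check both sides of the contract around a (not shown) skill call.
validate({"query": "refund policy", "top_k": 3}, CONTRACT["input"])
validate({"documents": []}, CONTRACT["output"])
```

Validating inputs and outputs against the same description keeps the error handling in one place and makes contract violations visible before they propagate to downstream skills.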

How to evaluate a skill

- Unit testing: Perform offline evaluation with standardized datasets and boundary samples.

- End-to-end evaluation: Monitor the impact of the skill on final business metrics in real workflows (e.g., user satisfaction, task completion rate).

- Online experiments: A/B test different skill implementations or parameters to observe differences in conversions or user behavior.

- Robustness and safety testing: Probe the skill with adversarial examples, anomalous inputs, and abuse scenarios.
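The offline layer of this evaluation can be sketched in a few lines of Python. The example below computes recall@k and wall-clock latency for a retrieval skill; the toy corpus, the `toy_retriever` function, and the labeled dataset are all invented for illustration and do not represent real results.

```python
import time
from typing import Callable, Dict, List, Sequence, Set, Tuple


def recall_at_k(retrieved: Sequence[str], relevant: Set[str], k: int) -> float:
    """Fraction of the relevant documents found in the top-k results."""
    if not relevant:
        raise ValueError("relevant set must not be empty")
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def evaluate(skill: Callable[[str, int], List[str]],
             dataset: List[Tuple[str, Set[str]]], k: int) -> Dict[str, float]:
    """Run the skill over labeled queries and report mean recall and max latency."""
    recalls, latencies_ms = [], []
    for query, relevant in dataset:
        start = time.perf_counter()
        retrieved = skill(query, k)
        latencies_ms.append((time.perf_counter() - start) * 1000.0)
        recalls.append(recall_at_k(retrieved, relevant, k))
    return {
        "recall@k": sum(recalls) / len(recalls),
        "latency_ms_max": max(latencies_ms),
    }


def toy_retriever(query: str, top_k: int) -> List[str]:
    """Hypothetical skill under test: keyword match over a tiny corpus."""
    corpus = {
        "d1": "reset password",
        "d2": "update billing address",
        "d3": "password policy",
    }
    words = query.lower().split()
    hits = [doc_id for doc_id, text in corpus.items()
            if any(word in text.split() for word in words)]
    return hits[:top_k]


# Two ordinary queries plus one boundary sample (empty query).
dataset = [("password", {"d1", "d3"}), ("billing", {"d2"}), ("", {"d1"})]
report = evaluate(toy_retriever, dataset, k=2)
print(report)
```

Including the boundary sample in the same dataset means a regression on edge cases shows up in the headline metric instead of being hidden in a separate test.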

Skill composition and orchestration

In practice, complex tasks often require combinations of multiple skills. Orchestration strategies include:

- Serial orchestration (chain): The output of one skill is the input to the next, suitable for linear workflows.

- Parallel and aggregate (parallel + aggregator): Call multiple skills in parallel and then merge results, suitable for multi-source evidence.

- Conditional branching (router): Select different skill paths based on input features.

The key is to handle interface compatibility, error propagation, and timeout strategies.
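The three strategies can be sketched as small higher-order functions in Python. This is an illustrative sketch that assumes every skill is a plain callable taking one value and returning one value; `chain`, `parallel`, and `router` are hypothetical helper names, and dropping slow sources is just one possible timeout strategy.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout
from typing import Any, Callable, Dict, List

Skill = Callable[[Any], Any]


def chain(*skills: Skill) -> Skill:
    """Serial orchestration: each skill's output feeds the next."""
    def run(value: Any) -> Any:
        for skill in skills:
            value = skill(value)  # an exception here aborts the whole chain
        return value
    return run


def parallel(skills: List[Skill], aggregate: Callable[[List[Any]], Any],
             timeout_s: float) -> Skill:
    """Parallel + aggregator: run all skills on the same input, merge results."""
    def run(value: Any) -> Any:
        results = []
        with ThreadPoolExecutor(max_workers=len(skills)) as pool:
            futures = [pool.submit(skill, value) for skill in skills]
            for future in futures:
                try:
                    results.append(future.result(timeout=timeout_s))
                except FutureTimeout:
                    continue  # assumed strategy: drop the slow source
        # Note: leaving the `with` block still waits for slow threads to finish.
        return aggregate(results)
    return run


def router(select: Callable[[Any], str], routes: Dict[str, Skill]) -> Skill:
    """Conditional branching: pick a skill path from input features."""
    def run(value: Any) -> Any:
        return routes[select(value)](value)
    return run


# Placeholder skills for the retrieval -> summarization -> formatting example.
def retrieve(query: str) -> List[str]:
    return [f"{query}: doc A", f"{query}: doc B"]


def summarize(docs: List[str]) -> str:
    return " | ".join(docs)


def fmt(summary: str) -> str:
    return summary.upper()


pipeline = chain(retrieve, summarize, fmt)
route = router(
    lambda q: "math" if any(ch.isdigit() for ch in q) else "search",
    {"math": lambda q: f"calc({q})", "search": pipeline},
)
print(route("faq"))
print(route("2 + 2"))
```

Because every combinator returns another skill with the same one-argument shape, composed pipelines can themselves be chained, routed, or run in parallel, which is where interface compatibility pays off.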

Practical recommendations and deployment considerations

- Start by splitting core capabilities: Prioritize abstracting capabilities that have the greatest business impact and high reusability into skills.

- Build an evaluation pipeline: Cover offline, online, and human-review layers for rapid iteration.

- Balance local capabilities and external tools: Keep latency-sensitive operations and sensitive data in controlled environments; route non-sensitive or frequently updated information through external retrieval or browser tools.

- Define fallback strategies: Have fallback logic when a skill fails (for example, simple template replies or human takeover).

- Documentation and governance: Clarify capability boundaries, privacy policies, and compliance requirements.
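The fallback recommendation in the list above can be sketched as a wrapper around any skill. The example is a minimal Python illustration; `with_fallback`, `template_reply`, and the `degraded` flag are hypothetical names, and a human-takeover fallback would slot in the same way as the template reply.

```python
import logging
from typing import Any, Callable, Dict

logger = logging.getLogger("skills")

Payload = Dict[str, Any]
Skill = Callable[[Payload], Payload]


def with_fallback(primary: Skill, fallback: Skill) -> Skill:
    """Return a skill that uses `fallback` whenever `primary` raises."""
    def run(payload: Payload) -> Payload:
        try:
            result = primary(payload)
        except Exception:
            # Record the failure case so it stays observable, then degrade.
            logger.exception("primary skill failed; using fallback")
            result = fallback(payload)
            result["degraded"] = True
            return result
        result["degraded"] = False
        return result
    return run


def template_reply(payload: Payload) -> Payload:
    """Assumed fallback: a fixed template reply."""
    return {"reply": "Sorry, we could not process your request. "
                     "A human agent will follow up."}
```

Marking the result as degraded lets downstream monitoring count how often the fallback path is taken, which feeds directly into the stability metrics of the skill.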

Impact on product and team

Modularizing capabilities into skills helps team division of labor (model teams, engineering teams, product teams each focusing on their area), accelerates reuse, and speeds up iteration. However, it also introduces complexities in version management, interface compatibility, and multi-skill coordination, which require governance strategies and automated pipelines to support.

Summary

An AI skill is less a single fixed concept than an engineering- and product-oriented capability abstraction: it has a clear contract and is measurable, composable, and observable. Grasping the boundaries and design principles of skills allows AI capabilities to serve real business needs more reliably. In practice, start from core capabilities, emphasize evaluation and fallback strategies, and maximize the value of each skill through good documentation and governance.

If you are building or evaluating AI capability modules, start with a small-scale skill abstraction: define the interfaces, test thoroughly, deploy and observe, then gradually expand composition and optimization.